Today I went to researchED at South Hampstead High School in London. It was a very fruitful day. I can’t say I heard a lot of new things; I knew most of it already, either because of my ‘job’ or because I had already read or heard about it via the blogosphere. But it was also very important: speaking from my background as a former secondary maths and computer science teacher and now lecturer/researcher (with some involvement in teacher training as well), I think it’s vital that practitioners (practice) and researchers work together in partnership. There are many obstacles to this happening – I imagine I know about the obstacles from both sides, practitioners and researchers, because I have experienced and am experiencing both. These are two distinct cultures that need to ‘bridge’ the gap. For this, government needs to ‘invest’ and not think -like with the maths hubs- that volunteering can cover this. But ok, enough about that. Here is a kaleidoscope of the sessions I visited (although I did tweet about some ‘abstracts’ I read but couldn’t visit).


I started off with Lucy Crehan, who reported on international comparisons and her experiences of visiting six countries to discover their education systems. I liked this session a lot because I work quite a lot with international comparative data but also with more qualitative data from, for example, the TIMSS video study. I try to combine both in the international network project ‘enGasia’, in which England, Hong Kong and Japan collaborate to (i) compare geometry education in those countries, (ii) design some digital maths books for geometry, and (iii) test them both qualitatively through Lesson Study and more quantitatively through a quasi-experiment. Some of the work from John Jerrim was mentioned.

Then I finally got to see Pedro De Bruyckere (slides here), whom I already knew to be a very engaging and funny speaker. He went through many of the myths in the book he wrote with Casper Hulshof and Paul Kirschner: Learning Styles, Learning pyramids, some TED talks (Sugata Mitra, who featured in previous blogposts here and here, and Ken Robinson). I can recommend the book as a quick way to get up to speed on myths (and almost-myths). I liked how Pedro described how the section on ‘grade retention’ became more nuanced between the Dutch and English editions of the book because of the results of a new study.

Then back to international comparisons with Tim Oates. I already knew his report on textbooks and I agree that textbooks have a lot to offer us. But then again, I would think that: in the Netherlands maths (my subject) textbooks are used a lot, and I edited the proceedings of the International Conference on Mathematics Textbook Research and Development 2014. Tim’s talk covered quotes on international comparisons and unpicked the problems (fallacies, faulty arguments) with them. He had a measured conclusion:

After lunch I went to the #journalclub with Beth Greville-Giddings for some cookies. I had prepared by reading the article and making these annotations. The session first explained the process of starting a journal club and then we discussed the paper. One interesting moment was when people discussed the statistics in the paper. I agreed with comments that because we were dealing with a reputable journal the statistics were probably correct. But in my view there is another problem: statistical literacy. In this paper two things stood out for me statistically (there were more, like the definition of engagement): the terms ‘significant’ and ‘variance explained’. With large-scale data the sample size is often quite high, so results reach significance more quickly. For this reason ‘effect size’ is probably more appropriate. Secondly, the statistics seemed to show that not much extra variance is explained by adding engagement predictors. Anyway, journal clubs seem to me like a worthwhile venture; it might be good to forge partnerships with HE as well.
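To make the point about significance and sample size a bit more concrete, here is a minimal sketch. The numbers are made up for illustration and have nothing to do with the paper we discussed: I assume a tiny standardised difference (d = 0.02) between two equal-sized groups and a simple normal approximation. The function name `two_sample_z` is just my own helper.

```python
import math

def two_sample_z(d, n_per_group):
    """Return (z, two-sided p) for a standardised mean difference d
    (in SD units) between two equal-sized groups, using a normal
    approximation. Illustrative helper, not from the paper."""
    # Standard error of a standardised difference with equal group sizes
    se = math.sqrt(2.0 / n_per_group)
    z = d / se
    # Two-sided p-value under the standard normal distribution
    p = math.erfc(abs(z) / math.sqrt(2.0))
    return z, p

effect = 0.02  # hypothetical, very small effect size (Cohen's d)
for n in (100, 100_000):
    z, p = two_sample_z(effect, n)
    print(f"n per group = {n:>7}: d = {effect}, z = {z:.2f}, p = {p:.3g}")
```

The effect size is identical in both runs; only the sample size changes. With 100 per group the difference is nowhere near significant, with 100,000 per group it is highly ‘significant’, yet it is still the same tiny effect.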

Crispin Weston then gave a lecture on how technology might revolutionise research. He framed this by first describing 7 problems and then showing how technology might ‘improve’ or ‘address’ them. It was an interesting approach which resulted in a matrix with ‘solved’ problems. Learning Analytics and standards (see my response to W3C priorities) had a prominent place. I’m a bit skeptical about whether it will all work. In the MC squared project we are implementing some Learning Analytics, including for creativity, and it’s bloody difficult.

Sri Pavar then talked about Cognitive Science. I think it’s good to summarise these principles. Principles like a memory model and relevant books (although some books referenced were not really about Cognitive Science) were presented. Cognitive Load Theory (CLT) had a prominent place. I couldn’t help tweeting some critical comments about CLT (a good summary here, a newer interesting blog on the measure used here). For example the ‘translation’ of research that a lower cognitive load is better: of course not, you wouldn’t learn anything. Or the often used measurement instrument:

Or the role of schemas: germane load was an (unfalsifiable) attempt at explaining schemas within the CLT framework, but apparently some have abandoned it because of its unfalsifiable nature. But then what? And what does it add to existing information processing theories?

The final session was by Professor Rob Coe. He talked about several things pertaining to ‘what works’. He talked about Randomised Controlled Trials and logic, and took us back to Dylan Wiliam’s talk at last year’s researchED.

I am with Coe here. Rob mentioned a little-cited paper that sounded very interesting.

Tom Bennett finished the day. In the North Star it was great to meet a whole range of Twitterati. It was an interesting day and professionally I hope practitioners and researchers (Primary, Secondary Education and Higher Education) can grow towards each other:

Oh, and let me end on a contrarian note: some people have got to read up on all that cognitive psychology: most researchers are far more nuanced in their papers 😉

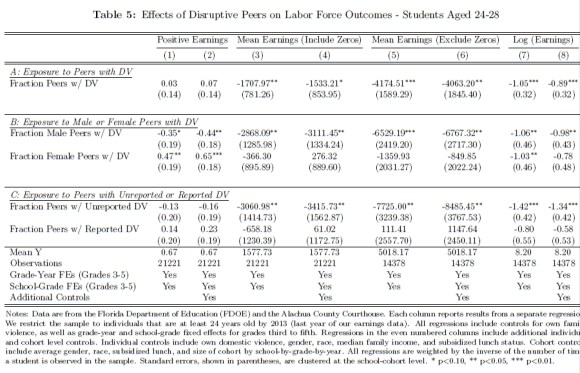

There are some interesting observations here. The abstract’s result is mentioned in the paper: “Estimates across columns (3) through (8) in Panel A indicate that elementary school exposure to one additional disruptive student in a class of 25 reduces earnings by between 3 and 4 percent. All estimates are significant at the 10 percent level, and all but one is significant at the 5 percent level.” The fact that economists would even want to use 10% (with such a large N) is already strange to me. Even 5% is tricky with those numbers. However, the main headline in the abstract can be confirmed. But have a look at panel C. It seems there is a difference between ‘reported’ and ‘unreported’ Domestic Violence (DV). Actually, reported DV has a (non-significant) positive effect. Where was that in the abstract? Rather than drawing a conclusion along the lines of whether DV was reported or not, the conclusion only focuses on the negative effects of *unreported* DV. I think it would be fairer to make a case for better signalling and monitoring of DV, so that the negative effects of unreported DV are countered; after all, there are no negative effects on peers when DV is reported.
