This is the English text of a blog that appeared on a Swedish site (kind translation by Sara Hjelm).

In efforts to debunk education myths there is a real danger that research is oversimplified. This is wholly understandable from the perspective of a teacher: finding and understanding research is a difficult process. The ‘wisdom of the crowds’ might help with this, but it often remains a challenge for all involved to translate complex research findings into concrete recommendations for teachers. It is certainly not the case that teachers can simply adopt and integrate these ideas into their daily practice. Furthermore, you can shout as often as you want that ‘according to research X should work’, but if it is not working in the classroom, you will make adjustments.

Why is it such a challenge for teachers to interpret research findings? As Howard-Jones (2014) indicates, this might firstly be because of cultural conditions, for example differences in terminology and language (e.g. see Lilienfeld et al., 2015; 2017). An example of this can be seen in the use of the words ‘significance’ and ‘reliability’. Both have a typical ‘daily use’ but also a specific meaning in statistics and assessment. A second reason Howard-Jones mentions is that counter-evidence might be difficult to access. A third element might be that claims are simply untestable, for example because they assume knowledge about cognitive processes, or even the brain, that is (as yet) unknown to us. Finally, an important factor we can’t rule out is bias. When we evaluate and scrutinise evidence, a range of emotional, developmental and cultural biases interact with emerging myths. One particularly important bias is ‘publication bias’, which might be one of the biggest challenges for academia in general. Publication bias is sometimes called the ‘file drawer problem’ and refers to the situation in which what you read in research articles is often just the positive outcomes. If a study does not yield a ‘special’ finding, then unfortunately it is less likely to be published.

Because of these challenges, navigating your way through the research landscape is very time-consuming and requires a lot of research knowledge, for example of research designs, prior literature, statistical methods, the key variables used and so forth. And even with this knowledge, understanding research will still take a lot of time. For a quick scan this might be 15 minutes or so, but for the full works you would have to look in detail at the instruments and the statistical methods, or follow up other articles referenced in a paper, often amounting to hours of work. This is time that busy practitioners haven’t got. Science is incremental, i.e. we build on an existing body of knowledge, and every new study provides a little more insight into the issue at hand. One study is most likely not enough to either confirm or disprove a set of existing studies. A body of knowledge can be more readily formed through triangulation and looking at the same phenomenon from different perspectives: ten experimental studies might sit next to ten qualitative studies, economic papers might sit next to classroom studies.

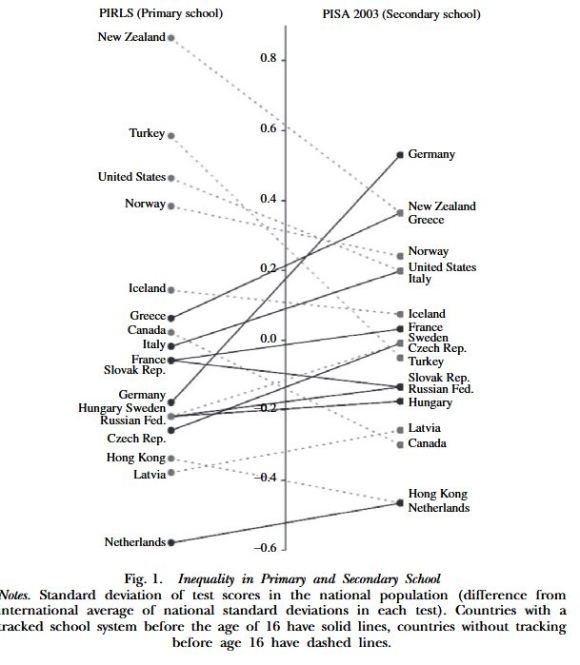

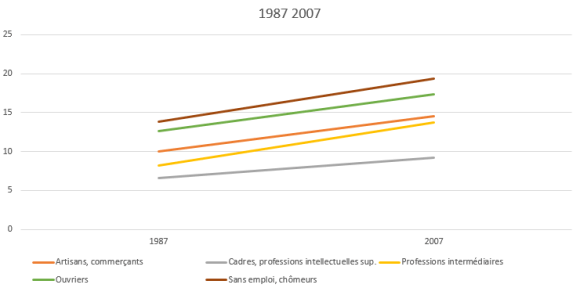

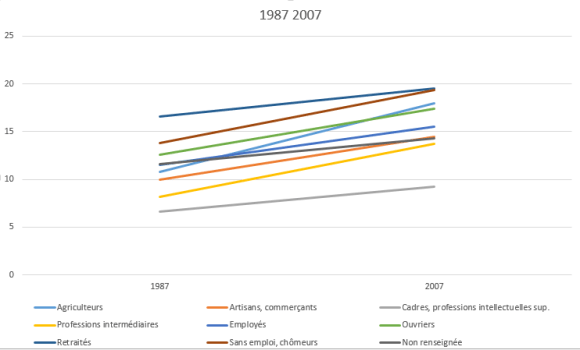

In my view, there are quite a lot of examples where there is a danger that simple conclusions might create new myths or misconceptions. Let me give two of them, both of which have been popular on social media. The first example is the work of E.D. Hirsch. I think his views can’t be seen separately from the US context. Hirsch is passionate about educational fairness, but the so-called Gini coefficient seems to indicate that systemic inequality is much larger in the US. Hirsch, in my view, also tends to disregard different types of knowledge: he is positive about ‘knowledge’ but quite negative about ‘skills’, for example. However, ‘skills’ could simply be seen as ‘practical knowledge’ (e.g. see Ohlsson, 2011), which emphasises the important role of knowledge while still acknowledging that you need more than ‘declarative knowledge’ to be ‘skilled’. In his last book, Hirsch also contends that a student-centred curriculum increased educational inequality in France, while more recent data and a more comprehensive analysis seem to indicate that this is not the case (see https://bokhove.net/2017/04/26/the-case-of-france/).

A second example might be the currently very popular Cognitive Load Theory by Professor John Sweller. Not everyone seems to realise that this theory does not include a view on motivation. Sweller is open about this and that’s fine, of course. That does not, however, mean that motivation is irrelevant. Research needs to indicate what its scope is, and what it does or does not include, and subsequent conclusions need to be commensurate with the research questions and scope. This precision in wording is important, but inevitably suffers from word count restrictions, whether in articles, blogs or 280-character tweets. There is a tension between brevity, clarity and doing justice to the complex nature of the education context.

Ideally, I think, we can help each other out. We need practitioners, we need academics, we need senior leadership, we need exam boards, we need subject specialists, to all work together. We also need improved incentives to build these bridges. I am hopeful that, if we do that, we can genuinely make a positive contribution to our schools.

Dr. Christian Bokhove was a secondary maths and computer science teacher in the Netherlands from 1998 to 2012 and is now a lecturer in mathematics education at the University of Southampton. He tweets as @cbokhove and has a blog he should write more for at www.bokhove.net

References

Howard-Jones, P. (2014). Neuroscience and education: myths and messages. Nature Reviews Neuroscience, 15(12), 817-824.

Lilienfeld, S.O., Pydych, A.L., Lynn, S.J., Latzman, R.D., & Waldman, I.D. (2017). 50 Differences that make a difference: A compendium of frequently confused term pairs in psychology. Frontiers in Education, https://doi.org/10.3389/feduc.2017.00037

Lilienfeld, S.O., Sauvigné, K.C., Lynn, S.J., Cautin, R.L., Latzman, R.D., & Waldman, I.D. (2015). Fifty psychological and psychiatric terms to avoid: a list of inaccurate, misleading, misused, ambiguous, and logically confused words and phrases. Frontiers in Psychology, https://doi.org/10.3389/fpsyg.2015.01100

Ohlsson, S. (2011). Deep Learning: How the Mind Overrides Experience. Cambridge University Press: New York.

